Vibe Coding Will Get You the Job. Engineering Will Keep It.


There is a moment every jazz musician knows. You have been playing for a year, maybe two. You have learned the scales. You have watched the YouTube tutorials. You can reproduce the licks. You sound, to the untrained ear, pretty good.

Then you sit in with real musicians.

And within four bars, everyone in the room knows.

Not because you played the wrong note. But because you do not *hear* the music the way they do. You are following a map. They are navigating by feel, by memory, by ten thousand hours of listening and failing and listening again. The difference is not in the notes. It is in what happens between them.

I have been thinking about this a lot lately, because the same thing is happening in software engineering right now — and almost nobody is talking about it honestly.

The Distinction That Will Define the Next Decade

In November 2025, Addy Osmani — Engineering Director at Google Chrome, one of the most respected voices in web engineering — published a piece that should be required reading for every developer, every hiring manager, and every student who has ever typed a prompt into Cursor and called themselves a coder.

His argument, stated plainly: vibe coding and AI-assisted engineering are not the same thing. They are not even close to the same thing. And conflating them is not just semantically sloppy — it is actively dangerous.

> "The big difference here is the human engineer remains firmly in control, responsible for the architecture, reviewing and understanding every line of AI-generated code, and ensuring the final product is secure, scalable, and maintainable."

> — Addy Osmani, Engineering Director, Google Chrome

Vibe coding, as coined by Andrej Karpathy, is the practice of fully surrendering to the AI's creative flow — high-level prompting, accepting suggestions without deep review, iterating fast, not worrying about what the code actually does underneath. It is genuinely useful for prototypes, weekend projects, MVPs, and learning. It flattens the learning curve. It democratizes creation.

But it is not engineering.

AI-assisted engineering is something else entirely. It is what happens when a senior engineer uses AI as a force multiplier — to generate boilerplate faster, to write initial test cases, to explore implementation options — while maintaining complete ownership of the architecture, the security model, the performance characteristics, and the long-term maintainability of every line that ships to production.

The 30% speed increase that FAANG teams report from AI tooling? That comes from the second approach, not the first. It comes from augmenting a rigorous process, not from abandoning one.

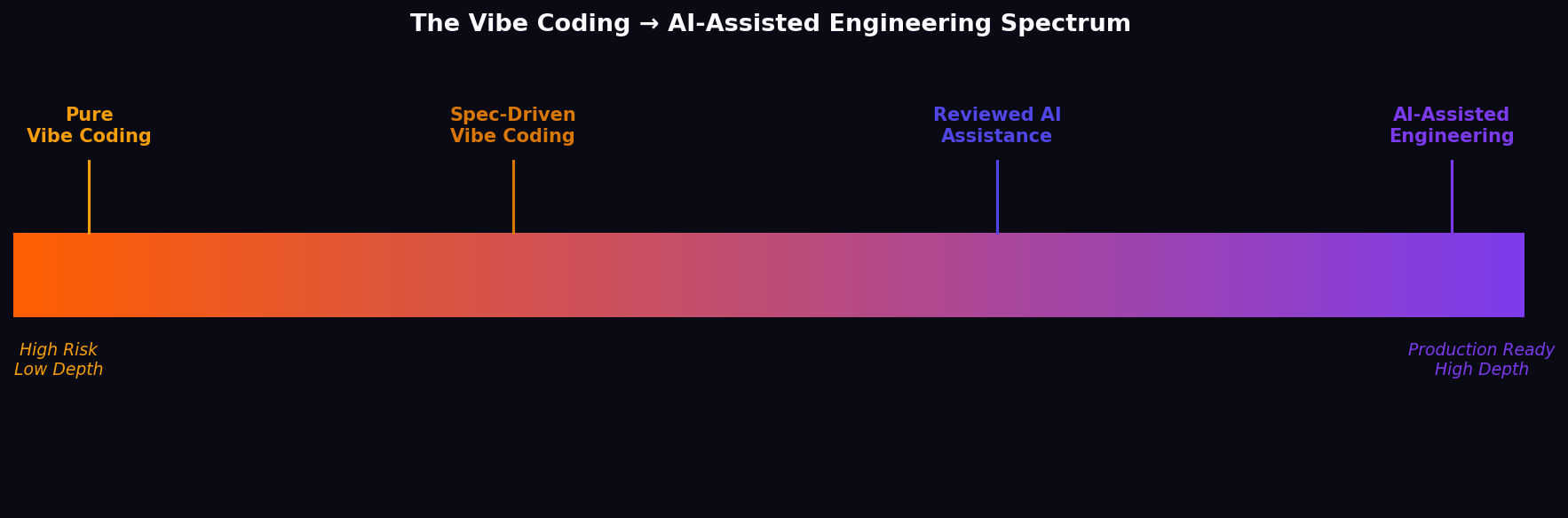

The Spectrum

There is a spectrum, and most people are arguing about the wrong end of it. On one end: pure vibe coding. No specs. No review. No tests. Accept everything the AI suggests. Ship it and see what happens. This is fine for a personal project. It is a liability in a production system.

On the other end: AI-assisted engineering. Detailed specifications written before a line of code is generated. Every AI output reviewed and understood by a human engineer. Test-driven development. Security review. Architecture owned by a person, not a prompt.

In the middle: a large, messy gray zone where most teams actually operate. The question is not which end of the spectrum is "right" — the question is: do you know where you are on it, and do you know the risks of where you are?

Most vibe coders do not. That is the problem.

The Data Is Already In. It Is Not Encouraging.

This is not theoretical. The production disasters are already happening.

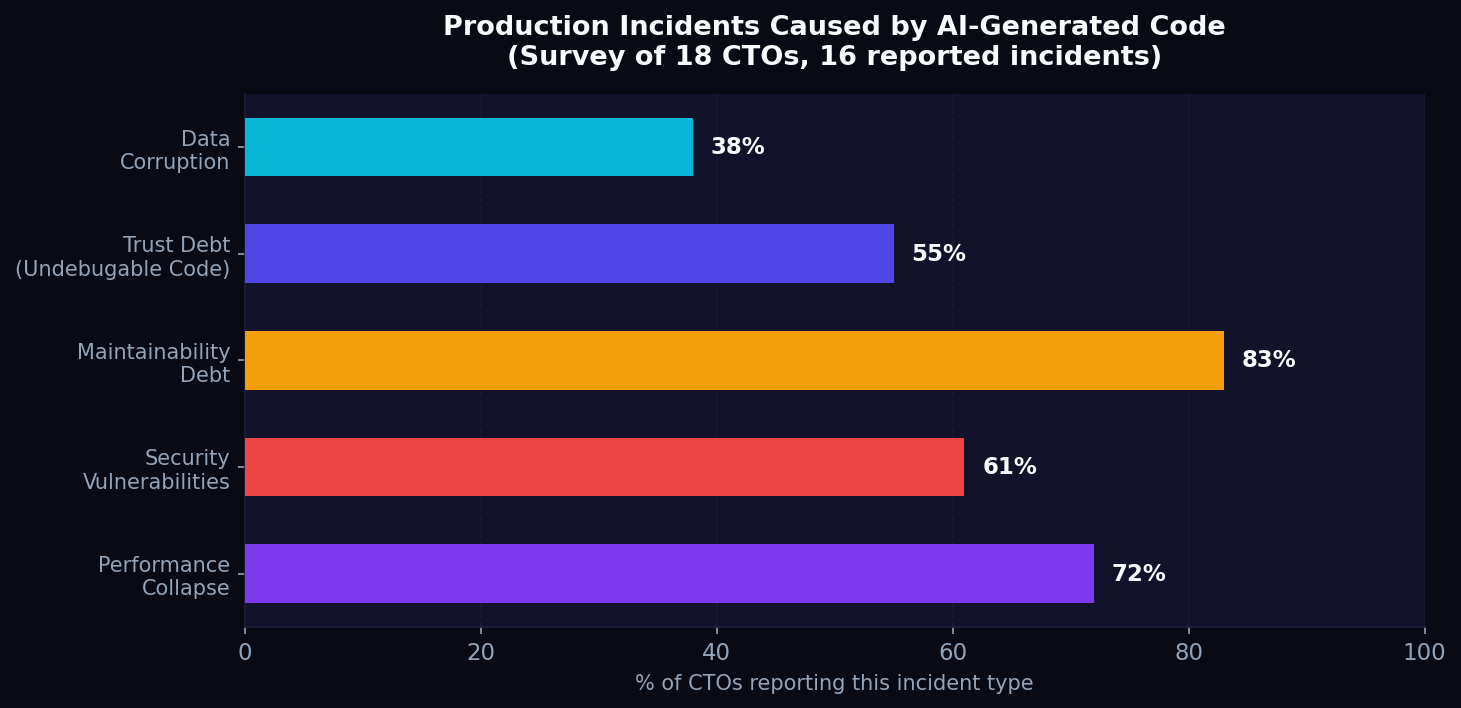

Osmani cites a survey of 18 CTOs, 16 of whom reported production incidents directly caused by AI-generated code. Not prototypes. Not side projects. Production systems, serving real users, handling real data.

The failure modes are consistent: performance collapse (code that passes tests but degrades catastrophically under real load), security vulnerabilities (subtle injection vectors, improper auth flows, exposed secrets), maintainability debt (systems that "appear to work perfectly until they catastrophically fail"), and trust debt — teams that cannot debug their own codebase because the code was never understood, only accepted.

Brendan Humphreys, CTO of Canva, put it with the bluntness that only someone who has seen the wreckage can:

> "No, you won't be vibe coding your way to production — not if you prioritize quality, safety, security and long-term maintainability at scale."

And from the developer community, the phrase that has stuck: "This isn't engineering, it's hoping."

What This Means for Careers — And This Is Where I Get Blunt

I have interviewed at Google. I have interviewed at Meta. I have interviewed at Amazon. I have interviewed at more Fortune 100 and Fortune 500 companies than most people will apply to in a lifetime. I have not deleted a single email from my Gmail inbox in 20 years. I have seen what these companies look for, and I have seen what they find out.

Here is what I know: you cannot fake depth in a technical interview.

You can vibe code your way to a portfolio. You can use AI to generate a GitHub full of impressive-looking repositories. You can even, in some cases, use AI to help you through the early rounds of a hiring process. But at some point — in a system design round, in a debugging session, in a production incident at 2am — the depth of your understanding is tested. And if it is not there, it is not there.

The music analogy is not a loose metaphor. It maps almost exactly. In jazz, you can practice your licks in your bedroom for years. But the moment you sit in with musicians who have real depth, you are found out. Not cruelly. Not maliciously. Just inevitably. The music reveals what you know and what you do not know, in real time, in front of everyone.

Software engineering is the same. The production incident reveals it. The code review reveals it. The architecture discussion reveals it. The moment someone asks you *why* you made a particular design decision and you have to say "the AI suggested it" — that is the moment.

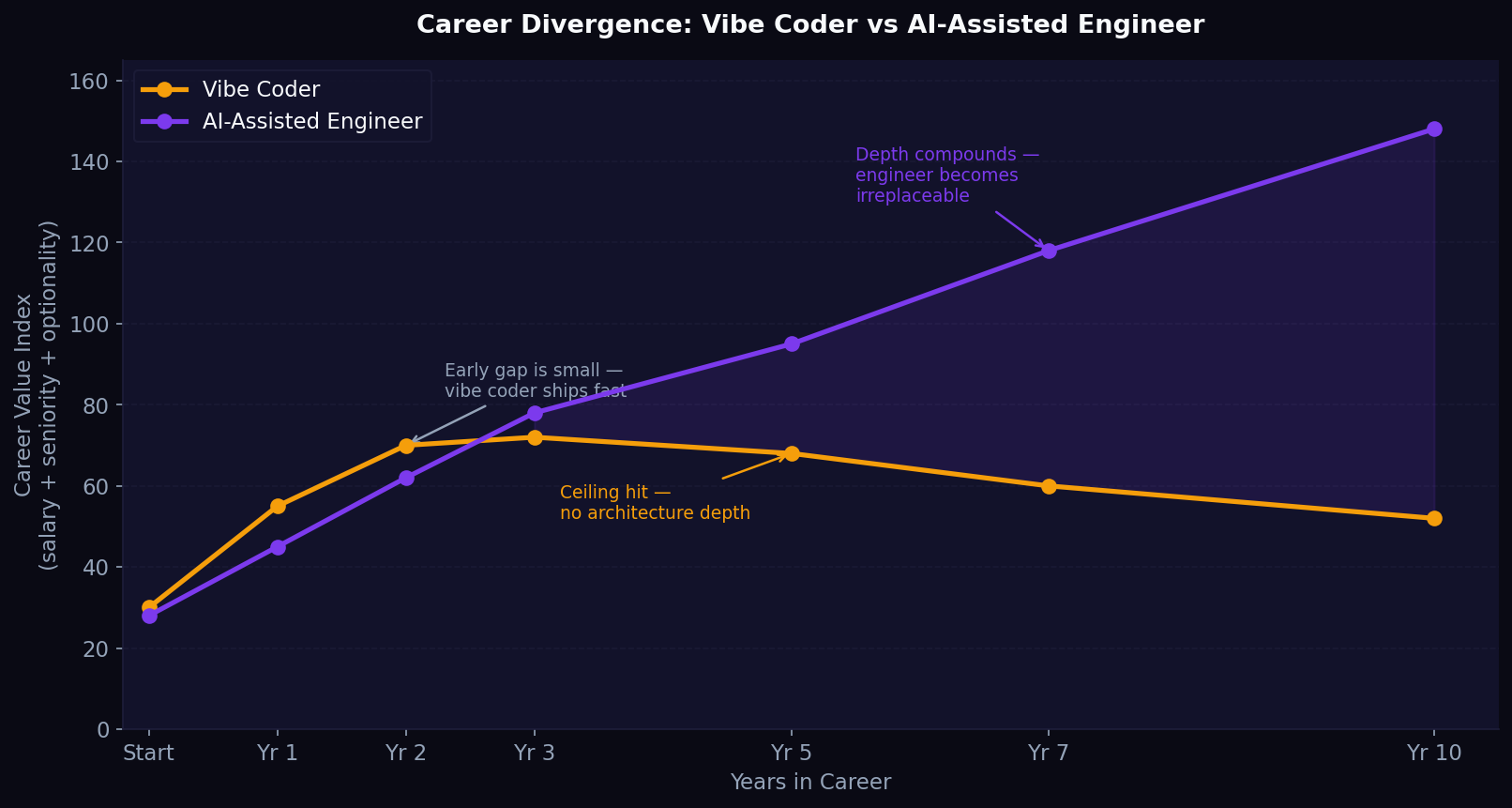

The Career Paths Are Diverging Right Now

Here is what I think happens over the next five years:

Vibe coders will get hired. The tooling has lowered the bar for entry-level output enough that many companies will onboard people who cannot explain their own code. This is already happening.

Some vibe coders will get promoted. They will be productive. They will ship features. They will look good in demos. Some will move into product management or project management, where the depth requirement is different.

Most vibe coders will eventually be found out. Not fired dramatically. Just quietly passed over. The senior engineer role will go to someone else. The architect title will go to someone else. The staff engineer path will close. And they will not always know why.

AI-assisted engineers will compound. Every year they use AI tools with genuine understanding, they get faster *and* deeper. The AI handles the mechanical work; the human handles the judgment. This is a compounding advantage. In five years, the gap between a vibe coder and an AI-assisted engineer with real depth will be larger than the gap between a junior and a senior engineer was in 2015.

The India Dimension

I want to say something specifically to the Indian engineers reading this, because this matters more for you than for almost anyone else.

India produces more engineering graduates than any country on earth. The competition for good jobs — in India, in the US, anywhere — is intense. The temptation to use AI to shortcut the hard work of actually learning is real and understandable.

But here is the thing: the companies that are hiring Indian engineers for serious roles are not hiring them for output volume. They are hiring them for depth. For the ability to sit with a hard problem, understand it completely, and build something that will not fall apart.

That reputation (Indian engineers who go deep, who understand systems, who can be trusted with critical infrastructure) is worth more than any shortcut. It took decades to build. It would take only a few years of vibe coding to destroy.

The engineers I know who have built real careers at Google, Meta, Amazon — they are not the ones who shipped the most code in their first year. They are the ones who, when something broke at 2am, understood why.

That understanding is not something you can prompt your way to. It is built through years of reading code, writing code, breaking things, fixing them, and doing it again.

What AI-Assisted Engineering Actually Looks Like

For clarity, here is what the disciplined approach looks like in practice:

Spec first, code second. Before you generate a single line of AI code, write a technical design document. What is the system supposed to do? What are the failure modes? What are the security requirements? The AI is a much better collaborator when it has a clear brief.

Review everything. Every line of AI-generated code gets read by a human who understands it. Not skimmed. Read. If you cannot explain what a function does and why it does it that way, you do not ship it.

Test-driven development. Write the tests first — or at minimum, write them before you consider the feature complete. AI is excellent at generating test cases. Use it for that. But own the test strategy yourself.

Security is not optional. AI-generated code has a documented tendency to introduce subtle security vulnerabilities. Every AI-generated feature needs a security review. Not a vibe check. A review.

Own the architecture. The AI can suggest implementations. You decide the architecture. The data model, the service boundaries, the scaling strategy, the failure recovery — these are human decisions. They require judgment that comes from experience, not from a prompt.
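To make the testing and security points above concrete, here is a minimal Python sketch, offered as an illustration and not a prescription. The `users` table, the `find_user_by_email` function, and the `make_test_db` helper are hypothetical names invented for this example. The tests spell out the expected behavior, including one hostile input, and the query binds the email as a parameter so the value is never spliced into the SQL text.

```python
import sqlite3


def make_test_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two known users (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [("a@example.com",), ("b@example.com",)],
    )
    return conn


def find_user_by_email(conn: sqlite3.Connection, email: str):
    """Return (id, email) for the matching user, or None if there is no match."""
    # The ? placeholder makes sqlite3 bind the value as data, so the email
    # text is never interpreted as SQL.
    cursor = conn.execute("SELECT id, email FROM users WHERE email = ?", (email,))
    return cursor.fetchone()


# The tests are plain functions, so pytest can collect them or they can be
# called directly. In a test-first workflow these would be written before
# find_user_by_email is implemented.
def test_returns_matching_user():
    conn = make_test_db()
    assert find_user_by_email(conn, "a@example.com") == (1, "a@example.com")


def test_unknown_email_returns_none():
    conn = make_test_db()
    assert find_user_by_email(conn, "nobody@example.com") is None


def test_injection_attempt_matches_nothing():
    conn = make_test_db()
    # A classic injection payload must be treated as an ordinary string.
    assert find_user_by_email(conn, "' OR '1'='1") is None
```

If the query were instead built with string formatting, the third test would fail, because the payload would turn the `WHERE` clause into a condition that is always true. That is the kind of defect a line-by-line review and an explicit test strategy are meant to catch before it ships.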

The Honest Conclusion

Addy Osmani is right. The distinction matters. Not because vibe coding is bad — it is a genuinely useful tool for the right context. But because pretending it is the same as engineering is a lie that will cost people their careers, cost companies their systems, and cost the industry its credibility.

The musicians who last are not the ones who learned the fastest. They are the ones who went deep enough that the music became part of how they think. You can hear it in every note they play.

The engineers who last will be the same. Not the ones who shipped the most with AI. The ones who understood what they were building, why it was built that way, and what would happen if it broke.

That depth is not built by prompting. It is built by doing the work.

---

*Ajay Jetty is the founder of Jetty Train. He runs free daily AI training at [jettytrain.com/AI-Training](https://jettytrain.com/AI-Training) every weekday at 9am PST — covering AI certifications, interview prep, and how to build real engineering depth in the age of AI.*
